Calculate gradients of model outputs with respect to inputs using native torch autograd. This function provides a lightweight alternative to run_grad and Gradient that works directly with any torch::nn_module object without requiring model conversion.

torch_grad(
  model,
  data,
  output_idx = NULL,
  times_input = FALSE,
  dtype = "float",
  return_object = FALSE
)

Arguments

- model
  (nn_module) A torch model. Must be an instance of nn_module.

- data
  (torch_tensor, array, or matrix) Input data for which to calculate gradients. If not already a torch tensor, it will be converted automatically. Expected shape: (batch_size, ...), where ... represents the input dimensions.

- output_idx
  (integer) Index or indices of output nodes for which to calculate gradients. If the model outputs a tensor of shape (batch_size, n_outputs), use indices 1 to n_outputs. Default: NULL (all outputs).

- times_input
  (logical(1)) If TRUE, multiplies the gradients by the input values (Gradient×Input method). Default: FALSE (Vanilla Gradient).

- dtype
  (character(1)) Data type for calculations. Either "float" for torch_float or "double" for torch_double. Default: "float".

- return_object
  (logical(1)) If TRUE, returns an InterpretingMethod object with methods like plot() and get_result(). If FALSE (default), returns a raw torch_tensor.
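As a rough illustration of what the data and dtype arguments describe, the conversion of a plain R matrix into a tensor can be done by hand with torch. This is only a sketch: the matrix x_mat is made up for the example, and it is an assumption that the automatic conversion inside torch_grad behaves the same way.

    library(torch)

    # Hypothetical input: a plain R matrix of shape (batch_size, n_features)
    x_mat <- matrix(rnorm(5 * 10), nrow = 5, ncol = 10)

    # Manual conversion to the two data types named by the dtype argument
    x_float  <- torch_tensor(x_mat, dtype = torch_float())
    x_double <- torch_tensor(x_mat, dtype = torch_double())

Either tensor could then be passed as data; passing x_mat itself should be equivalent if the conversion works as described above.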

Value

If return_object = FALSE (default): A torch_tensor containing the gradients with shape (batch_size, ..., n_outputs).

If return_object = TRUE: An InterpretingMethod object.

Details

This function computes the gradients of the outputs with respect to the input variables, i.e., for every input variable \(i\) and output class \(j\): $$\frac{\partial f(x)_j}{\partial x_i}$$

If times_input = TRUE, the gradients are multiplied by the respective input value (Gradient×Input): $$x_i \cdot \frac{\partial f(x)_j}{\partial x_i}$$

While vanilla gradients highlight the features to which the prediction is most sensitive, Gradient×Input decomposes the output into feature-wise effects based on the first-order Taylor decomposition.
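The two quantities above can be sketched with torch autograd directly. This is a minimal illustration, not necessarily how torch_grad is implemented; the toy model, the input x, and the output index j are assumptions made for the example.

    library(torch)

    # Hypothetical toy model and input, used only for illustration
    model <- nn_sequential(
      nn_linear(10, 50),
      nn_relu(),
      nn_linear(50, 3)
    )
    x <- torch_randn(5, 10, requires_grad = TRUE)

    out <- model(x)  # shape (5, 3)

    # Gradient of output j with respect to the inputs. Summing over the batch
    # is safe here because each sample's output depends only on its own row.
    j <- 1
    grad_j <- autograd_grad(out[, j]$sum(), x)[[1]]  # shape (5, 10)

    # Gradient×Input: element-wise product of the gradient and the input values
    grad_times_input_j <- grad_j * x$detach()

Repeating the autograd_grad call for every output index (with retain_graph = TRUE on all but the last call) and stacking the results along a new last dimension would give a tensor of shape (batch_size, ..., n_outputs), matching the shape described under Value.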

Performance

This function is typically faster and more memory-efficient than run_grad because it:

- Avoids model conversion overhead
- Uses native torch autograd directly

Comparison with run_grad

torch_grad is recommended when:

- Working with non-sequential torch models
- Working with torch models exclusively
- Performance is critical

Use run_grad when:

- Working with keras or neuralnet models
- You need other attribution methods (LRP, DeepLift, etc.)

See also

Other direct torch methods: torch_expgrad(), torch_intgrad(), torch_smoothgrad()

Examples

library(torch)

# Create a simple model

model <- nn_sequential(

nn_linear(10, 50),

nn_relu(),

nn_linear(50, 3)

)

# Generate some data

data <- torch_randn(5, 10)

# Calculate vanilla gradients

grads <- torch_grad(model, data)

# Calculate Gradient×Input

grads_times_input <- torch_grad(model, data, times_input = TRUE)

# Calculate gradients for specific output

grads_class1 <- torch_grad(model, data, output_idx = 1)

# Get result as innsight object with plot() support

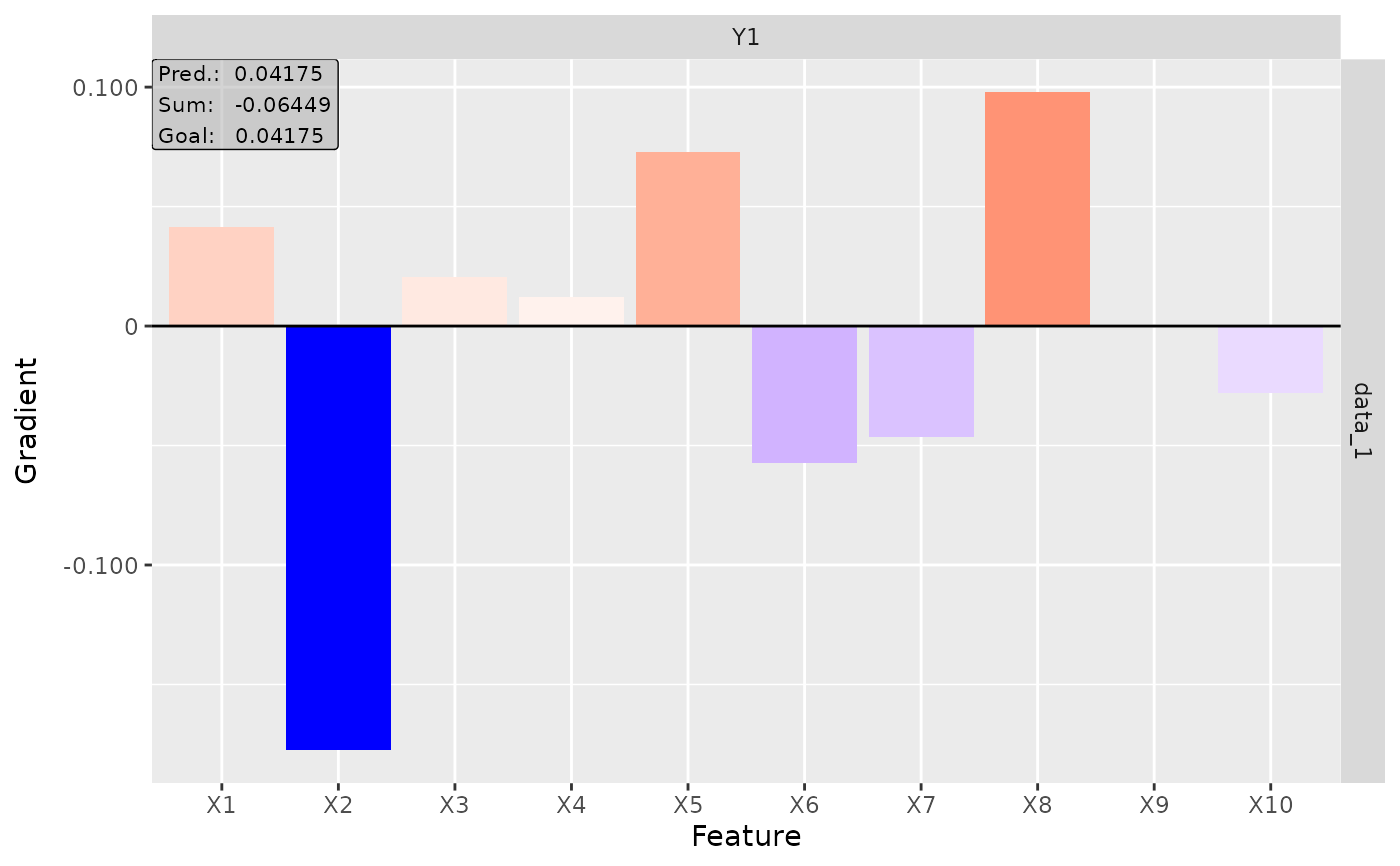

result <- torch_grad(model, data, return_object = TRUE)

plot(result)